Customer value is delivered at point-of-sale, not point-of-plan.

—Jim Highsmith, Agile Project Management [1]

Enterprise Solution Delivery

Enterprise Solution Delivery (ESD) is one of the seven core competencies for Business Agility, each of which is essential to achieving it. Each competency is supported by a specific assessment, which enables the enterprise to assess its proficiency. The Measure and Grow article provides these core competency assessments and recommended improvement opportunities.

Why Enterprise Solution Delivery?

Building and evolving large enterprise solutions is a monumental effort. These systems require hundreds or thousands of engineers and are subject to significant regulatory and compliance constraints. Large software systems may host complex user journeys and experiences that cross multiple products and lines of business. Cyber-physical systems require a broad range of engineering disciplines and utilize hardware and other long lead-time items. As such, they demand sophisticated, rigorous practices for engineering, operations, and evolution.

NOTE: See the Applying SAFe to Hardware Development article for more details on applying SAFe’s Lean-Agile practices to the hardware domain.

While maintaining the same levels of quality and compliance, enterprises must now deliver solutions faster than ever. Many large solution builders apply the well-known ‘V’ model [2] that encourages large batches of specification and design activities with handoffs between them. Unfortunately, this slows development and delays feedback. When building systems with significant technical, user, and market uncertainty, delayed feedback often results in missed deadlines, cost overruns, and poor business outcomes.

Adopt SAFe’s Lean-Agile Mindset, Values, and Principles

Competing and thriving in the digital age requires a different approach to building large solutions. But transitioning to a new, foreign development process can appear daunting and risky. SAFe’s mindset, values, and principles provide the foundation for the new way of working and an enhanced company culture that enables faster, more reliable delivery with higher customer satisfaction and employee engagement. Together, they guide organizations on their journey toward Business Agility:

- A Lean-Agile Mindset ensures leaders and practitioners embrace the underlying values and principles when applying the SAFe and ESD practices. Lean Thinking shifts development from a traditional, large batch-and-queue system to continuous flow. Agile adopts an iterative development approach focused on fast feedback and learning. Collectively, the values and principles of Lean Thinking and Agile form the DNA of everything within SAFe.

- Belief in SAFe’s Core Values of alignment, transparency, respect for people, and relentless improvement must be deeply held and exhibited by everyone in the enterprise. These tenets guide the behaviors and actions of everyone participating in the new way of working and form its foundation.

- Ten underlying Lean-Agile Principles inform all the roles, artifacts, and practices in SAFe and influence everyone’s behavior and decision-making. Understanding their meaning and purpose is critical as organizations apply and tailor SAFe to their specific context. While not every SAFe practice applies similarly in every circumstance, the principles guide practitioners towards the goal of Lean: “shortest sustainable lead time, with best quality and value to people and society.” [3]

Five SAFe Principles particularly influence large solution builders’ adoption of ESD practices. They are summarized below:

- Principle #3, Assume variability; preserve options addresses the inherent uncertainty in large solution development and encourages practitioners to keep options open by applying Set-Based Design and a fixed-variable Solution Intent.

- Principle #4, Build incrementally with fast, integrated learning cycles recognizes that new knowledge, rather than highly detailed plans and specifications, reduces uncertainty. Quickly building, integrating, and, when possible, deploying new system functionality provides the technical, user, and operational feedback needed to learn and adjust.

- Principle #5, Base milestones on objective evaluation of working systems encourages solution builders to evaluate progress based on objective, demonstrable evidence rather than proxies like traditional phase-gate milestones.

- Principle #9, Decentralize decision-making reduces the delays inherent in escalating decisions. At large scale, these delays can dramatically lower productivity and decision quality, delay feedback, and limit innovation.

- Principle #10, Organize around value reduces the functional silos that encourage large work batches, handoffs, and delays and creates optimal Agile Teams and Development Value Streams that can deliver value faster, more predictably, and with higher quality.

Ten Practices for Enterprise Solution Delivery

The ESD competency describes ten best practices for applying Lean-Agile development to build and advance some of the world’s most important solutions. The three dimensions in Figure 1 group these ten practices.

Lean Systems Engineering applies Lean-Agile practices to align and coordinate all the activities necessary to specify, architect, design, implement, test, deploy, evolve, and ultimately decommission these systems. These practices are:

- Specify the solution incrementally

- Apply multiple planning horizons

- Design for change

- Frequently integrate the end-to-end solution

- Continually address compliance concerns

Coordinating Trains and Suppliers manages and aligns the extended and often complex set of value streams to a shared business and technology mission. These practices are:

- Use Solution Trains to build large solutions

- Manage the supply chain

Continually Evolve Live Systems ensures large solutions and their development pipeline support continuous delivery. These practices are:

- Build an end-to-end Continuous Delivery Pipeline (CDP)

- Evolve deployed systems

- Actively manage artificial intelligence/machine learning systems

The remaining sections describe these ten practices.

Specify the Solution Incrementally

Large solution builders use specifications to manage requirements and designs, communicate them to system builders, and support compliance. The common specification process follows the ‘V’ model (left side of Figure 2), which typically results in large batches of upfront specification work that delay implementation and feedback. While all the ‘V’ model activities (e.g., specifying requirements) are still critically important to solution development, in SAFe, engineers perform them concurrently and in smaller batches.

To support frequent change, Solution Managers and Architects use the Solution Intent, Backlogs, and Roadmaps. The Solution Intent communicates the solution’s current requirements and design decisions. Some are fixed and known early (see the 100 ‘shall’ statements in Figure 2). Others may vary (see the many open questions in Figure 2) and become fixed over time as teams explore alternatives (Set-Based Design) to find optimal implementations. Backlogs and roadmaps manage the work that helps reduce uncertainty and build the solution as assumptions are validated.

By managing and communicating a more flexible approach to the system’s current and intended structure and behavior, the Solution Intent aligns all solution builders to a shared direction. Its companion, Solution Context, defines the system’s deployment, environmental, and operational constraints. Together, they align teams and provide the necessary information for compliance. Teams use the Solution Intent to drive their backlogs and localized decision-making, as shown in Figure 3. In Agile development, backlogs and face-to-face conversations replace part of the traditional, detailed requirements hierarchies.

At scale, the Solution Intent and Context are connected across the supply chain (Figure 4). While some decisions are fixed early, others vary. As downstream subsystem and component teams implement decisions, the knowledge gained from their Continuous Exploration (CE), Integration (CI), and (where possible) Deployment (CD) efforts provides insights back upstream, moving decisions from variable to fixed.
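The fixed-versus-variable idea can be made concrete with a small data structure. The sketch below is illustrative only: the names `Decision`, `SolutionIntent`, and `fix`, and the example decisions, are hypothetical and not part of SAFe. It assumes each variable decision carries a set of explored options and becomes fixed once downstream learning supports one of them.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional


class Status(Enum):
    FIXED = "fixed"
    VARIABLE = "variable"


@dataclass
class Decision:
    """One requirement or design decision (hypothetical model)."""
    name: str
    status: Status
    options: List[str] = field(default_factory=list)  # alternatives still open
    chosen: Optional[str] = None

    def fix(self, option: str) -> None:
        """Move the decision from variable to fixed once learning supports it."""
        if self.status is Status.FIXED:
            raise ValueError(f"{self.name} is already fixed")
        if option not in self.options:
            raise ValueError(f"{option!r} was not one of the explored options")
        self.chosen = option
        self.options = []
        self.status = Status.FIXED


@dataclass
class SolutionIntent:
    """Holds the current fixed and variable decisions (hypothetical model)."""
    decisions: Dict[str, Decision] = field(default_factory=dict)

    def add(self, decision: Decision) -> None:
        self.decisions[decision.name] = decision

    def variable(self) -> List[str]:
        """Names of decisions that are still open."""
        return [d.name for d in self.decisions.values()
                if d.status is Status.VARIABLE]


# Example data is invented for illustration.
intent = SolutionIntent()
intent.add(Decision("braking-distance-limit", Status.FIXED, chosen="regulatory value"))
intent.add(Decision("obstacle-sensor", Status.VARIABLE,
                    options=["lidar", "radar", "camera"]))

open_before = intent.variable()              # the sensor choice is still open
intent.decisions["obstacle-sensor"].fix("radar")  # feedback from integration
open_after = intent.variable()               # nothing remains variable
```

Keeping the open options alongside each variable decision mirrors Set-Based Design: alternatives stay visible until evidence justifies committing to one.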

Apply Multiple Planning Horizons

Fixed, detailed plans are difficult to adjust and discourage the quick response to change required when building innovative solutions. Instead, Agile practitioners replace detailed schedules with Roadmaps to manage and forecast work and quickly adjust them as new facts emerge. Multiple planning horizons separate those responsible for setting the solution’s longer-term vision and milestones from the ARTs and teams who build the solution, allowing the builders to detail and plan their own work.

Figure 5 shows how multiple horizons of planning apply to Solution Trains. A multi-year solution roadmap of milestones and epics informs the PI Roadmaps that decompose nearer-term epics into Features and Capabilities. Multiple planning horizons allow the people closest to the work to create their own plans, guided by the larger plan for the overall solution.

Design for Change

Architectural decisions are critical economic choices because they significantly impact the effort and cost required for future changes. Loosely coupled architectures are the strongest predictor of continuous delivery [4]. A good architectural design enables ARTs and teams to independently develop and release ‘Value Streamlets’ (solution components within a larger value stream), as shown in Figure 6. The components are described below:

- The Routing and Scheduling components are allocated to cloud services and support continuous updates

- The Mobile Delivery Application is also software-based and supports continuous delivery

- The Vehicle Control and Navigation components run on programmable hardware (CPUs and FPGAs) in the vehicle and can be updated over-the-air

- Hardware sensors are mounted on the chassis and are rarely updated because they require taking the vehicle out of service and possible recertification

Solution designs must balance the needs of operations and development. Design choices often optimize for operations to reduce unit, manufacturing, and delivery costs without fully understanding the total costs of delayed value delivery. For example, due to their lower unit costs and power consumption, many automotive control systems use custom application-specific integrated circuits (ASICs) instead of programmable parts (CPUs, FPGAs). However, the logic is fixed during ASIC manufacturing and cannot be reprogrammed, significantly increasing the cost of changes. Optimal designs balance both concerns, as shown in Figure 7.

Frequently Integrate the End-to-End Solution

Specifying and building the solution in smaller batches enables developers to integrate the end-to-end system more frequently. Frequent integration provides faster learning on the technical, user, and market assumptions and risks inherent in large solution development.

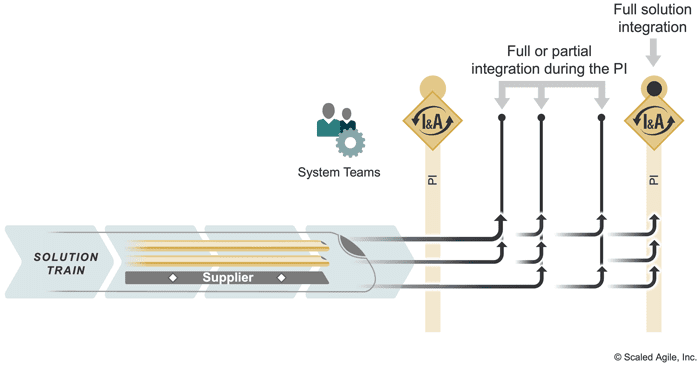

Many practices support frequent integration. Applying a common cadence across all system builders and using Solution Demos as ‘pull events’ [5] ensures the entire system is learning, not only the individual components (see SAFe Principle #4, Build incrementally with fast, integrated learning cycles). Built-in Quality practices foster frequent integration for all component types, including software, hardware, IT systems, and cyber-physical systems. A System Team often provides the unique knowledge and skills necessary to integrate the end-to-end solution and reduce the cognitive load on the ARTs and other teams (Figure 8).

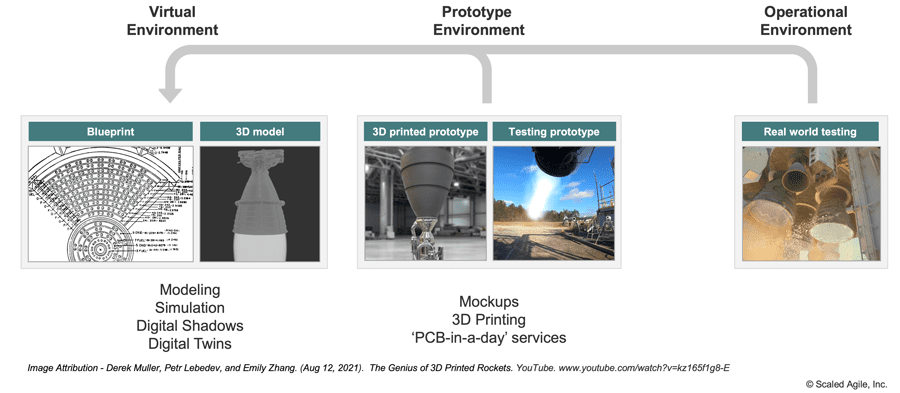

When full integration is impractical, partial integration lessens risks. Through partial integration (Figure 9), Agile teams and trains integrate and test in a smaller context using virtual/emulated environments, mockups, simulations, and other models described below. Early integration often leverages proxies. For example, significant IT components can be proxied with test doubles, and cyber-physical components can leverage prototypes, development kits, and breadboards.
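As a rough illustration of proxying a component with a test double, the sketch below integrates a controller against a stub that stands in for a navigation unit that is not yet available. All names (`NavigationUnit`, `NavigationStub`, `VehicleController`) and the steering logic are hypothetical and chosen only to show the pattern.

```python
from typing import List, Protocol, Tuple

Position = Tuple[float, float]


class NavigationUnit(Protocol):
    """Interface the real component and its proxy both satisfy (assumed)."""
    def next_heading(self, position: Position) -> float: ...


class NavigationStub:
    """Test double standing in for a component that is not yet available."""

    def __init__(self, canned_heading: float) -> None:
        self.canned_heading = canned_heading
        self.calls: List[Position] = []  # record calls so tests can inspect them

    def next_heading(self, position: Position) -> float:
        self.calls.append(position)
        return self.canned_heading


class VehicleController:
    """Component under test; depends only on the NavigationUnit interface."""

    def __init__(self, nav: NavigationUnit) -> None:
        self.nav = nav

    def steering_command(self, position: Position, current_heading: float) -> float:
        target = self.nav.next_heading(position)
        # Smallest signed turn in degrees, in the range [-180, 180).
        return (target - current_heading + 180.0) % 360.0 - 180.0


# Integrate the controller with the proxy instead of the real unit.
stub = NavigationStub(canned_heading=90.0)
controller = VehicleController(stub)
command = controller.steering_command((0.0, 0.0), current_heading=350.0)
print(command)  # 100.0: turning from 350 degrees to 90 degrees
```

Because the controller depends on an interface rather than a concrete part, the real navigation unit can later replace the stub without changing the controller.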

Manufacturing costs and other constraints of long-lead-time items impact the economics of frequently creating and integrating new parts for cyber-physical systems. Fortunately, virtual modeling and rapid prototyping innovations provide environments for faster learning. Modeling and simulation innovations for Digital Shadows and Digital Twins (see MBSE) enable early learning and decision-making. And in situations requiring physical parts for feedback, rapid manufacturing service providers (PCB-In-A-Day) can quickly produce electrical, mechanical, and other physical components (Figure 10). See [6] to hear how one company’s approach is reducing cycle time and cost by a factor of 10 to accelerate learning.

Continually Address Compliance Concerns

Typically, any large solution failure has unacceptable social or economic costs. To protect public safety, avoid lawsuits and prevent unwanted press coverage, these systems must undergo routine regulatory oversight and satisfy various compliance requirements.

Organizations rely on quality management systems (QMS) to dictate practices and procedures and confirm safety and efficacy. However, most QMSs were created before Lean-Agile development and were therefore based on conventional approaches that often assumed (or even mandated) early commitment to:

- Unvalidated specifications and design decisions

- Detailed work breakdown structures

- Document-centric, phase-gate milestones

In contrast, a Lean QMS makes compliance activities part of the regular flow of value delivery (Figure 11). For more information on Agile compliance, see the SAFe Extended Guidance article Achieving Regulatory and Industry Standard Compliance with SAFe.

Use Solution Trains to Build Large Solutions

Ultimately, it’s people who build systems. SAFe ARTs and Solution Trains define proven structures, patterns, and practices that coordinate and align the many developers and engineers who define, build, validate, and deploy large solutions. ARTs are optimized to align and coordinate significant groups of individuals (50-125 people) into a team of Agile teams. Solution Trains scale ARTs to build very large solutions with hundreds of developers and suppliers (Figure 12).

As solutions scale, alignment becomes critical. Delays in ARTs and teams accumulate, leading to missed deadlines and sizable cost overruns. Solution Trains align ARTs through Coordinate and Deliver practices. They integrate the end-to-end solution at least every PI to validate customer, business, and technical assumptions. Teams and ARTs building components with longer lead times (e.g., packaged applications, hardware) still deliver incrementally through proxies (stubs, mockups, prototypes, and models), integrating with the overall solution and supporting early validation and learning.

Manage the Supply Chain

Most large solution development efforts include internal and external Suppliers who bring unique expertise and existing solutions to accelerate delivery. Critical to solution success, these strategic partners should operate like an ART by participating in SAFe events (planning, demos, I&A), using backlogs and roadmaps, and adjusting to changes. Agile Contracts encourage collaboration.

To operate like an ART, suppliers must utilize ART-like roles. The supplier’s Product Manager and Architect continuously align backlogs, roadmaps, and architectural runways with the overall solution. Similarly, System Teams from both organizations share scripts and context to minimize errors and delays with integration handoffs.

Supply chains can become complicated. Figure 13 shows an automotive supply chain where a solution in one context is part of a large solution in another. To ensure alignment, all Product Managers must continually synchronize their roadmaps to maximize each PI’s value, adjusting together as new facts emerge.

There are known patterns for coordinating suppliers who deliver to multiple customers. Figure 14 shows three approaches for the Autonomous driving solution from Figure 13 to support its three customers. ‘Clone-and-own,’ a common technique, creates a custom solution for each customer. Although copying prior solutions can speed delivery, it prevents economies of scale and often raises costs and lowers quality.

A ‘platform’ approach treats the solution like a product, with a vision and roadmap. To create a single solution (or product line of solution variants) for multiple customers, all solution builders work from one value stream. The ‘internal open-source model’ blends the two by embedding Enabling Teams (see Topologies in Agile Teams) from the platform inside the customer value stream or approving individuals to make changes and commit them back into the platform. This way, dependent value streams can meet their delivery needs while maintaining the benefits of a platform.

Build an End-to-End Continuous Delivery Pipeline

Continuous integration is the heartbeat of continuous delivery: the forcing function that verifies changes and validates assumptions across the entire system. Agile software teams invest in automation and infrastructure that frequently build, integrate, and test small developer changes, as shown in the software portion of Figure 15 below.

Large solutions are far more challenging to integrate continuously because:

- Long lead-time items may not be available

- Integration spans multiple system and organizational boundaries

- Automation is rarely feasible end-to-end

- The laws of physics dictate limits for integrating physical system elements

Still, the Agile goal is to verify and validate changes quickly for fast feedback. Different components will utilize different Continuous Delivery Pipelines (CDP), as shown in Figure 15. To support faster learning for cyber-physical components, a hardware CDP uses the digital engineering and rapid prototyping techniques discussed earlier in the frequent integration section.

Many technologies enable the pipelines. While the software technologies are well-known and becoming standardized, the hardware community is just beginning to leverage emerging hardware technologies (Figure 16).

Evolve Deployed Systems

Because large solutions deliver significant value for many decades, they require continuous investment to support changing technology and business needs. A typical project-based approach to development invests in the initial system development but requires separate ‘modernization efforts’ to update the system. A product-based approach to development recognizes that solutions evolve continuously and funds a Development Value Stream that continually flows value to customers.

Leaders define a product vision and roadmap to accelerate time to market and simultaneously build the solution and the CDP infrastructure necessary to evolve it (Figure 17). Over their lifecycle, products follow the typical S-curve adoption (see Solution), with innovation and Design Thinking occurring throughout to evolve the solution. A fast, economical delivery pipeline allows organizations to release a minimum viable solution early and advance it later.

Actively Manage Artificial Intelligence/Machine Learning Systems

Practices in AI, machine learning (ML), and data science are rapidly maturing and being used onboard solutions to control behavioral logic and offline to improve performance and delivery. The entire enterprise also uses the data generated by these large solutions to gain business insights. Solution builders have four critical considerations when applying AI and ML to these solutions:

- Telemetry – Build telemetry into the solution and the operational infrastructure to collect critical information about the system, its users, and the operating environment. Large solutions can provide data vital to improving products, optimizing operations, and understanding customers and markets better (see Big Data for more information).

- Data Management – Large solutions generate massive volumes of data at high velocities. Solution Architects collaborate with data scientists to determine what data to store, where to store it (onboard or offline), and for what duration.

- Design for AI and ML – AI/ML algorithms are replacing humans and fixed code logic to drive solution decision-making and behavior. However, these algorithms require massive datasets and significant computing power. Solution designs for AI/ML must, therefore, balance where to put the power and algorithm logic:

- Onboard the solution, close to the data but with expensive computing resources, or

- Offline, with limited data and slower response times but access to massive, cheap computing resources

- Tune models – Unlike traditional code with fixed logic, AI/ML algorithms change their behavior over time as the data sets they learn from grow during operations. These applications, and the models they are built on, require manual supervision to ensure they do not drift from intent. Data scientists must monitor algorithms for their predictive quality and retrain models when that quality drops below a defined threshold.
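The monitoring step in the last consideration can be sketched as a rolling quality check. This is a minimal illustration under stated assumptions, not a prescribed SAFe mechanism: the `DriftMonitor` class is hypothetical, predictive quality is simplified to accuracy over a fixed window of recent predictions, and the threshold and window size are arbitrary example values.

```python
from collections import deque
from typing import Deque, Hashable


class DriftMonitor:
    """Tracks accuracy over the most recent predictions (hypothetical helper)."""

    def __init__(self, threshold: float, window: int) -> None:
        self.threshold = threshold
        self.window = window
        # Oldest outcomes fall off automatically once the window is full.
        self.outcomes: Deque[bool] = deque(maxlen=window)

    def record(self, predicted: Hashable, actual: Hashable) -> None:
        """Store whether one prediction matched the observed outcome."""
        self.outcomes.append(predicted == actual)

    def accuracy(self) -> float:
        """Fraction of correct predictions in the window (1.0 if empty)."""
        if not self.outcomes:
            return 1.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self) -> bool:
        # Judge only once the window is full, to avoid reacting to a few samples.
        return len(self.outcomes) == self.window and self.accuracy() < self.threshold


# Example values are invented: 3 of 5 recent predictions were correct.
monitor = DriftMonitor(threshold=0.8, window=5)
observations = [("stop", "stop"), ("go", "stop"), ("go", "go"),
                ("stop", "go"), ("go", "go")]
for predicted, actual in observations:
    monitor.record(predicted, actual)

print(monitor.accuracy())          # 0.6
print(monitor.needs_retraining())  # True, because 0.6 is below 0.8
```

In practice, a flag like this would prompt a data scientist to review and retrain the model, which keeps the supervision described above in the loop.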

Accelerating Flow of Large Solution Delivery

As explained by Principle #6 – Make value flow without interruptions, SAFe is a flow-based system that promotes fast, efficient value delivery. Flow occurs when Agile teams, ARTs, and the portfolio can deliver high-quality products and services with minimal delay.

Improving flow is critical in large solution development to reduce waste and accelerate delivery for these significant investments. Bottlenecks, impediments, and redundant activities are continuously detected and remediated at all levels of SAFe to accelerate flow throughout the value stream. The ten ESD practices identify common inefficiencies that the flow accelerators described in the Solution Train Flow article can help identify and correct.

Summary

Agile development has shown the benefits of delivering early and often to generate frequent feedback and develop solutions that delight customers. Organizations must apply the same approach to larger and more complicated systems to stay competitive. ESD builds on the advances in Lean systems engineering and emerging technologies to provide a more flexible approach to the development, deployment, and operation of large solutions.

ESD also describes the necessary adaptations to create a CDP in a cyber-physical environment by leveraging simulation and virtualization. This competency also provides strategies for maintaining and updating these true ‘living systems’ to extend their life and continually deliver higher value to end-users.

Learn More

[1] Highsmith, Jim. Agile Project Management: Creating Innovative Products. Addison-Wesley Professional, 2009.

[2] System Lifecycle Process Models: VEE. SEBoK, 2023. Retrieved October 11, 2023, from https://www.sebokwiki.org/wiki/System_Life_Cycle_Process_Models:_Vee

[3] Larman, Craig, and Bas Vodde. Scaling Lean & Agile Development: Thinking and Organizational Tools for Large-Scale Scrum. Addison-Wesley Professional, 2008.

[4] 2017 State of DevOps Report. https://www.puppet.com/resources/history-of-devops-reports#2017

[5] Oosterwal, Dantar P. The Lean Machine: How Harley-Davidson Drove Top-Line Growth and Profitability with Revolutionary Lean Product Development. Amacom, 2010.

[6] The Genius of 3D Printed Rockets. Retrieved October 11, 2023, from https://www.youtube.com/watch?v=kz165f1g8-E&t=479s

Ward, Allen C., and Durward K. Sobek II. Lean Product and Process Development. Lean Enterprise Institute, 2014.

Reinertsen, Donald G. The Principles of Product Development Flow: Second Generation Lean Product Development. Celeritas Publishing, 2009.

Last Update: 11 October 2023